With a successful EAGE Digital behind us, we could not miss our appointment with the EAGE AI Committee. This month we are focusing on subsurface numerical modelling. Stay tuned for Part 2!

As a group of EAGE members and volunteers, the EAGE A.I. Committee is dedicated to helping you navigate the digital world and find the bits that are most relevant to geoscientists.

You are welcome to join EAGE or renew your membership to support the work of the EAGE A.I. Community and access all the benefits offered by the Association.

EAGE Membership Benefits: Join or Renew

Curious to know all EAGE is doing for the digital transformation?

Visit the EAGE Digitalization Hub


AI in Subsurface Numerical Modelling: An Overview / Part 1

By Cédric M. John

In this two-part newsletter, we focus on one particular area of AI: surrogate models, or how AI can help improve scientific computation and subsurface modelling. The general public is now well aware of the use of AI in image recognition, in text-to-speech and speech-to-text conversion, and, more recently, of the ability of large language models such as ChatGPT to generate text. Comparatively little is known about surrogate models. And yet, as geoscientists, this is an area of AI worth keeping an eye on, as it holds great promise. This piece only scratches the surface of a fascinating topic: it is meant as a cursory introduction to the approach and is by no means an exhaustive account.


Background: the Pain-Points of Numerical Simulations

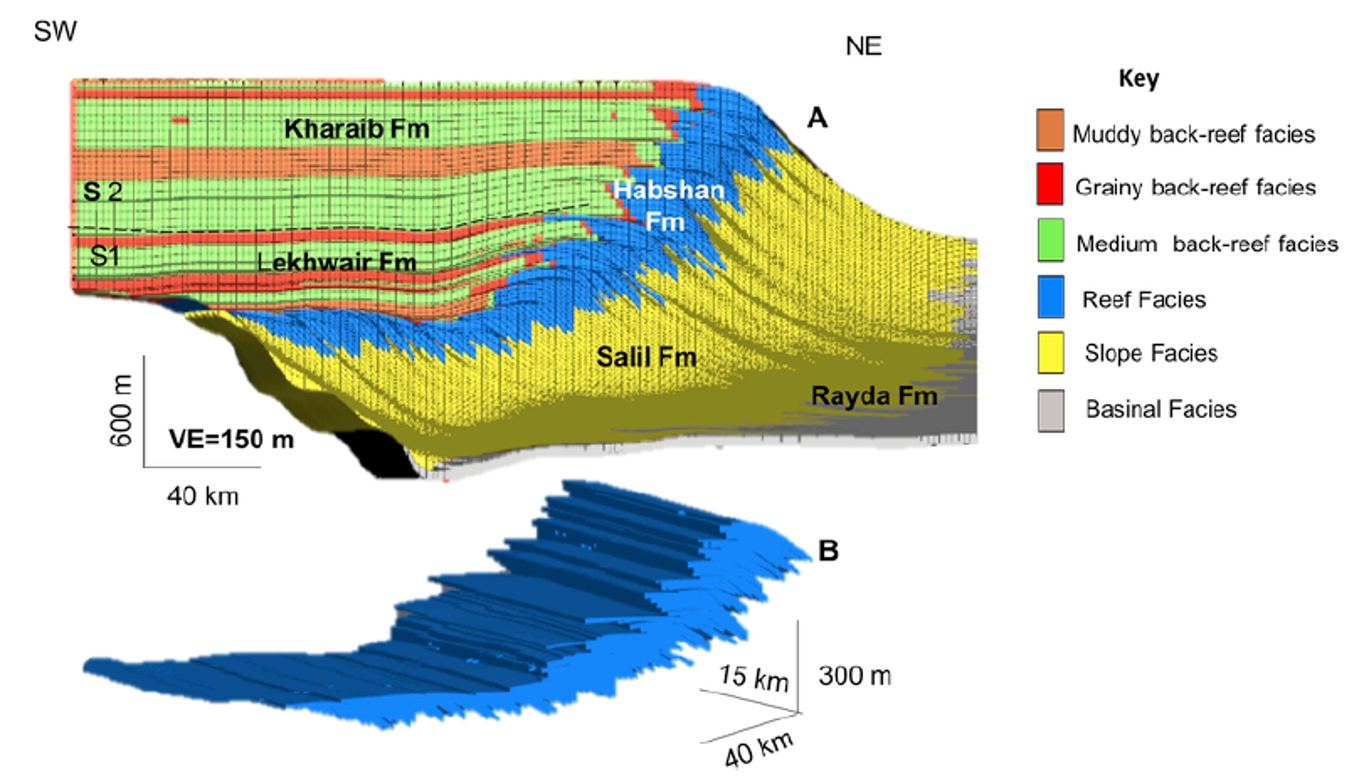

Here, we use “numerical simulation” to mean computer simulations that explicitly solve the governing equations of a physical system, often expressed as partial differential equations (PDEs). This is in contrast to data-driven, i.e. AI-driven, simulations. Numerical simulations are a great way for geoscientists to constrain the subsurface. The general principle is to encode the governing physical equations and solve them in space (usually on a grid) and often in time (i.e. forward or backward in time). The physics incorporated can be very close to the exact physics of the system (for instance, when modelling multi-phase fluid flow in porous media, or the fluid dynamics of air, ocean currents or streams), although often only an approximation of the physics is incorporated (for instance, sediment transport at geological timescales is often estimated using simplified diffusion equations, Figure 1).
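To make “solving in space on a grid and forward in time” concrete, here is a minimal sketch of diffusion-based sediment transport, assuming a 1D elevation profile, a constant diffusivity `kappa` and an explicit finite-difference scheme. The function name, grid and parameter values are hypothetical and are not taken from the study shown in Figure 1.

```python
def diffuse_profile(heights, kappa, dx, dt, n_steps):
    """Evolve a 1D elevation profile h(x) under dh/dt = kappa * d2h/dx2.

    Explicit forward-time, centred-space (FTCS) finite differences on a
    regular grid; the two end nodes are held fixed (Dirichlet boundaries).
    """
    r = kappa * dt / dx**2
    if r > 0.5:
        # The explicit scheme is only stable for kappa * dt / dx^2 <= 1/2.
        raise ValueError(f"unstable time step: kappa*dt/dx^2 = {r:.3f} > 0.5")
    h = list(heights)
    for _ in range(n_steps):  # march forward in time
        new_h = h[:]  # copies the fixed boundary values
        for i in range(1, len(h) - 1):  # update interior nodes in space
            new_h[i] = h[i] + r * (h[i + 1] - 2 * h[i] + h[i - 1])
        h = new_h
    return h


if __name__ == "__main__":
    # Hypothetical initial profile: a 10 m step (e.g. a shelf edge) on 21 nodes.
    initial = [10.0] * 10 + [0.0] * 11
    final = diffuse_profile(initial, kappa=1.0, dx=1.0, dt=0.25, n_steps=200)
    print([round(v, 2) for v in final])  # the step is smoothed into a ramp
```

Every realisation requires marching through all time steps on the full grid, which is where the computational cost discussed below comes from.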

Figure 1: Forward stratigraphic models are good examples of subsurface models that require a stepwise approach and multiple realisations to capture subsurface uncertainty. In this example from the subsurface of the Middle East, sediment transport was approximated by diffusion. Source: Marya Al-Salmi, Cedric M. John, Nicolas Hawie, 2019, Quantitative controls on the regional geometries and heterogeneities of the Rayda to Shu’aiba formations (Northern Oman) using forward stratigraphic modelling, Marine and Petroleum Geology, Volume 99, January 2019, Pages 45-60

The problem with the full-physics approach is that it is computationally very expensive, even when simplified equations are used. The need for large computing power is aggravated by the fact that most numerical simulation software runs on expensive CPUs rather than on the cheaper GPUs used for machine learning. Add to this the inherent uncertainty of subsurface geology, and thus the need for multiple model realizations to capture that uncertainty, and the long computation times force sacrifices in the number of iterations, the size of the modelled domain, or the grid size. This issue can even limit the types of geological problems that can be tackled by numerical methods.
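A back-of-the-envelope estimate illustrates why refining the grid is so costly. Assuming, purely for illustration, an explicit finite-difference solution of the 1D diffusion equation $\partial h/\partial t = \kappa\,\partial^2 h/\partial x^2$ on a domain of length $L$ simulated over a duration $T$, stability limits the time step $\Delta t$ for a grid spacing $\Delta x$, and the total work scales with the number of nodes times the number of time steps:

$$
\Delta t \le \frac{\Delta x^{2}}{2\kappa}
\quad\Longrightarrow\quad
N_t = \frac{T}{\Delta t} \ge \frac{2\kappa T}{\Delta x^{2}},
\qquad
N_x = \frac{L}{\Delta x},
\qquad
\text{cost} \propto N_x N_t \ge \frac{2\kappa L T}{\Delta x^{3}}.
$$

Halving $\Delta x$ therefore multiplies the cost by $2^3 = 8$ in 1D, and by $2^5 = 32$ on a 3D grid (where the node count scales as $\Delta x^{-3}$ while the time step still scales as $\Delta x^{2}$), before multiplying again by the number of realisations.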

A recent research trend is thus to incorporate AI in the simulation loop, either as a replacement for numerical models or as a surrogate for the full-physics model. The rationale is that, although training a machine learning model carries a large computational cost, once trained its inference time (i.e. the time it takes to compute a new realization) is very short. A trained machine learning model can thus generate model realizations in a fraction of the time needed by the numerical solution.
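The train-once, infer-cheaply trade-off can be sketched in a few lines. This is a toy illustration under loud assumptions: the “full-physics” model is a hypothetical 1D diffusion solver with a single input parameter, and piecewise-linear interpolation between precomputed runs stands in for the neural network a real study would train. All names are illustrative.

```python
import bisect
import time


def expensive_simulation(kappa, n_nodes=21, dx=1.0, dt=0.2, n_steps=500):
    """Hypothetical 'full-physics' model: explicit 1D diffusion of a step profile.

    Returns the elevation at node 5 after n_steps time steps. It stands in for
    any costly numerical solver whose input is a single parameter (kappa).
    """
    r = kappa * dt / dx**2
    if r > 0.5:
        raise ValueError("explicit scheme unstable for this kappa")
    h = [10.0] * (n_nodes // 2) + [0.0] * (n_nodes - n_nodes // 2)
    for _ in range(n_steps):
        interior = [h[i] + r * (h[i + 1] - 2 * h[i] + h[i - 1])
                    for i in range(1, n_nodes - 1)]
        h = [h[0]] + interior + [h[-1]]  # end nodes stay fixed
    return h[5]


class InterpolatingSurrogate:
    """Cheap stand-in for a trained ML surrogate: piecewise-linear interpolation.

    'Training' runs the expensive simulator once per training parameter;
    'inference' only interpolates between the stored results.
    """

    def __init__(self, simulator, training_params):
        self.xs = sorted(training_params)
        self.ys = [simulator(x) for x in self.xs]  # the costly, one-off step

    def predict(self, x):
        if not self.xs[0] <= x <= self.xs[-1]:
            raise ValueError("outside the training range: no valid prediction")
        j = bisect.bisect_left(self.xs, x)
        if self.xs[j] == x:
            return self.ys[j]
        x0, x1 = self.xs[j - 1], self.xs[j]
        y0, y1 = self.ys[j - 1], self.ys[j]
        return y0 + (y1 - y0) * (x - x0) / (x1 - x0)


if __name__ == "__main__":
    surrogate = InterpolatingSurrogate(expensive_simulation, [0.1, 0.5, 1.0, 1.5, 2.0])
    kappa_new = 0.75  # a value never simulated during 'training'
    t0 = time.perf_counter()
    full = expensive_simulation(kappa_new)
    t1 = time.perf_counter()
    fast = surrogate.predict(kappa_new)
    t2 = time.perf_counter()
    print(f"full model: {full:.3f} in {t1 - t0:.2e} s")
    print(f"surrogate:  {fast:.3f} in {t2 - t1:.2e} s")
```

The surrogate refuses to predict outside its training range on purpose: a surrogate is generally only trustworthy within the parameter space it was trained on.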


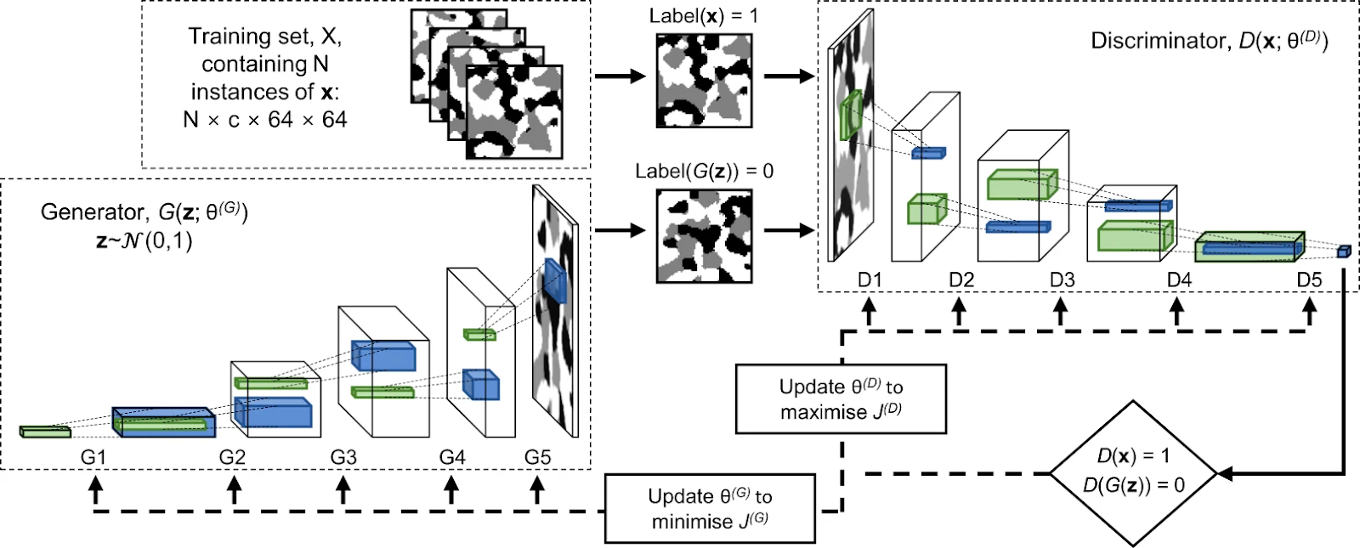

Using Generative Models as “geostatistical” models

Generative models have been at the forefront of the new AI wave of the last two years. Recent image generators (such as OpenAI's DALL-E 2) are based on diffusion models, and text generators (such as OpenAI's ChatGPT) on large transformer-based language models, but older and simpler approaches also exist. Notably, Generative Adversarial Networks (or “GANs”) have been around for several years. The basic principle of GANs is that two models are trained together: the first (the generator) is tasked with generating a fake image (or other data) from initial noise, and the second (the discriminator) is tasked with classifying an image as either real or fake (Figure 2).

Figure 2: Example of a GAN architecture used to generate pore volumes in three dimensions. Source: Andrea Gayon-Lombardo, Lukas Mosser, Nigel P. Brandon & Samuel J. Cooper, 2020, Pores for thought: generative adversarial networks for stochastic reconstruction of 3D multi-phase electrode microstructures with periodic boundaries, npj Computational Materials, volume 6, Article number: 82
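The generator-versus-discriminator training described above can be written formally. In the original GAN formulation (Goodfellow and colleagues, 2014), it is a minimax game in which $D(x)$ is the discriminator's estimated probability that a sample $x$ is real, $G(z)$ is the sample the generator produces from noise $z$, $p_{\text{data}}$ is the distribution of the training set and $p_z$ is the noise distribution:

$$
\min_{G}\,\max_{D}\; V(D,G) =
\mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big]
+ \mathbb{E}_{z \sim p_{z}}\big[\log\big(1 - D(G(z))\big)\big].
$$

The discriminator maximises this value by classifying real and generated samples correctly, while the generator minimises it by producing samples that the discriminator scores as real. In practice, the generator is often trained to maximise $\log D(G(z))$ instead, which gives stronger gradients early in training.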

Because the discriminator learns from the real images in the training set when judging the generated ones, the generator is pushed towards creating new samples that conform to the statistical distribution of the training set. Therefore, once trained, GANs and similar deep-learning approaches offer a very computationally efficient way to perform geostatistical simulations. To generate a new image, noise is provided as input to the generator. This input can be modified to incorporate the parameters of the model, thus conditioning the output on the desired parameter values.
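The generation step can be sketched as a single forward pass. The generator below is hypothetical and deliberately untrained (its weights are random), so it only illustrates the data flow, not realistic geology. It assumes one common conditioning strategy used in conditional GANs: appending the model parameters to the noise vector. Class and parameter names are illustrative.

```python
import math
import random


class ToyConditionalGenerator:
    """Hypothetical, untrained generator illustrating the data flow only.

    Input: a noise vector z concatenated with conditioning parameters c.
    Output: an n_rows x n_cols grid of values in (0, 1), e.g. facies probabilities.
    In a real GAN these weights would come from adversarial training.
    """

    def __init__(self, noise_dim, cond_dim, n_rows, n_cols, hidden=16, seed=0):
        rng = random.Random(seed)
        in_dim = noise_dim + cond_dim
        self.noise_dim, self.cond_dim = noise_dim, cond_dim
        self.n_rows, self.n_cols = n_rows, n_cols
        # Random weights for one hidden layer and one output layer.
        self.w1 = [[rng.gauss(0, 1) for _ in range(in_dim)] for _ in range(hidden)]
        self.w2 = [[rng.gauss(0, 1) / math.sqrt(hidden) for _ in range(hidden)]
                   for _ in range(n_rows * n_cols)]

    def generate(self, z, c):
        if len(z) != self.noise_dim or len(c) != self.cond_dim:
            raise ValueError("unexpected input size")
        x = list(z) + list(c)  # conditioning by concatenation
        hidden = [math.tanh(sum(w * v for w, v in zip(row, x))) for row in self.w1]
        # Sigmoid squashes each output cell into (0, 1).
        out = [1 / (1 + math.exp(-sum(w * h for w, h in zip(row, hidden))))
               for row in self.w2]
        return [out[r * self.n_cols:(r + 1) * self.n_cols] for r in range(self.n_rows)]


if __name__ == "__main__":
    gen = ToyConditionalGenerator(noise_dim=8, cond_dim=1, n_rows=4, n_cols=6)
    rng = random.Random(42)
    z = [rng.gauss(0, 1) for _ in range(8)]  # one noise draw = one realisation
    for param in (0.2, 0.8):  # hypothetical model parameter, e.g. a sand fraction
        grid = gen.generate(z, [param])
        # Untrained weights: the map changes with param but does not honour it.
        print(param, [[int(p > 0.5) for p in row] for row in grid])
```

Drawing a new `z` gives a new realisation at the cost of a few multiplications per cell, which is why a trained generator is so much cheaper than re-running a simulator.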

This approach has been used, for instance, to generate realistic pore volumes for fluid-flow simulation (see Figure 2, Gayon-Lombardo et al., 2020), but also to model subsurface reservoir facies distributions (Chen et al., 2022; Zhang et al., 2021).

Conclusion to Part 1

GANs are very useful for producing realistic-looking renditions of the subsurface. They can incorporate calibration constraints (at least to some extent) and are very fast. However, they do not replace forward (or backward) models such as the one presented in Figure 1, and they do not incorporate any physical constraints. We will see next month, in Part 2 of this newsletter, that some AI models open up this possibility.


Did you miss EAGE Digital last week? Dive into EarthDoc to read the abstracts presented at the conference!

And if you are looking for more opportunities in the world of digitalization, here are some of the next projects you can join via EAGE:

- Data Science for Geoscience, an extensive interactive online course by Jef Caers (Stanford University) starting on 11 April

- Seventh EAGE High Performance Computing Workshop: the call for abstracts is open until 20 May

- Annual Hackathon – Natural Language Processing on 4-5 June. Join the challenge!

This newsletter is edited by the EAGE A.I. Committee.

| Name | Company / Institution | Country |
|---|---|---|
| Anna Dubovik | WAIW | United Arab Emirates |
| Jan H. van de Mortel | Independent | Netherlands |
| Jing Sun | TU Delft | Netherlands |
| Julio Cárdenas | Géolithe | France |
| George Ghon | Capgemini | Norway |
| Lukas Mosser | Aker BP | Norway |
| Oleg Ovcharenko | NVIDIA | United Arab Emirates |
| Roderick Perez | OMV | Austria |
| Surender Manral | Schlumberger | Norway |
| Yohanes Nuwara | Aker BP | Norway |